pgEdge Control Plane Adds Supporting Services and Preview of systemd Support

Most Postgres management tools ask you to pick a lane. You can manage databases, or you can manage the services around them. You can run in containers, or you can run on bare metal. You get one deployment model, one operational surface, one set of assumptions about how your infrastructure works.

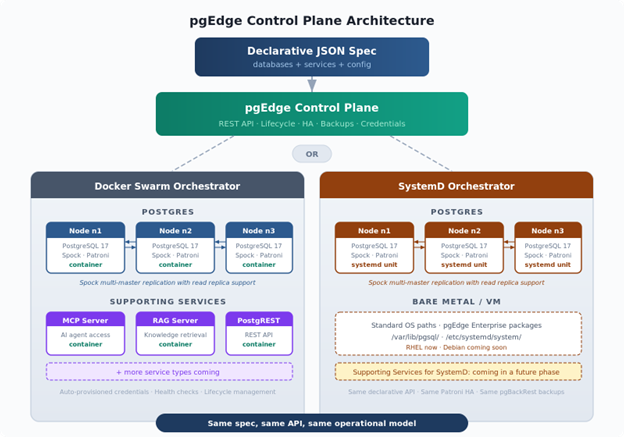

The pgEdge Control Plane just added two features that refuse to pick a lane: Supporting Services and systemd Support. Together, they push the Control Plane into territory that, as far as we can tell, nobody else in the Postgres world is covering. Supporting Services is fully available, while systemd support is currently a Preview feature.

Supporting Services: More Than Just Postgres

Here's the thing about enterprise Postgres in 2026: the database is only part of the story. Your AI agents need an MCP server to talk to the data, your applications need a REST API to query it, and your knowledge base needs a RAG server to index and retrieve from it. These services aren't optional extras; they're what make the database useful in production.

Until now, you managed those services separately: different deployment pipelines, different configuration, different credentials, different monitoring. The database lived in one world and the services that depended on it lived in another, even though they're fundamentally coupled. When the database moves, the services need to follow, and when credentials rotate, every connected service needs to know about it. When you scale out, everything needs to come along for the ride.

Supporting Services in the Control Plane fixes this by treating the database and its surrounding services as a single declarative unit. You add a services array to the same JSON spec you already use for your database, and the Control Plane handles deployment, credential provisioning, health checking, and lifecycle management for everything together.

    {
      "id": "storefront",
      "spec": {
        "database_name": "storefront",
        "database_users": [
          {
            "username": "admin",
            "password": "...",
            "db_owner": true,
            "attributes": ["LOGIN", "SUPERUSER"]
          },
          {
            "username": "web_anon",
            "attributes": ["NOLOGIN"]
          }
        ],
        "nodes": [
          { "name": "n1", "host_ids": ["us-east-1"] },
          { "name": "n2", "host_ids": ["eu-west-1"] }
        ],
        "scripts": {
          "post_database_create": [
            "GRANT USAGE ON SCHEMA public TO web_anon",
            "ALTER DEFAULT PRIVILEGES FOR ROLE admin GRANT SELECT ON TABLES TO web_anon",
            "ALTER DEFAULT PRIVILEGES FOR ROLE admin GRANT SELECT ON SEQUENCES TO web_anon"
          ]
        },
        "services": [
          {
            "service_id": "ai-mcp",
            "service_type": "mcp",
            "version": "latest",
            "host_ids": ["us-east-1"],
            "connect_as": "admin",
            "port": 8080,
            "config": {
              "llm_enabled": true,
              "llm_provider": "anthropic",
              "llm_model": "claude-sonnet-4-6",
              "anthropic_api_key": "sk-ant-...",
              "init_token": "my-bootstrap-token"
            }
          },
          {
            "service_id": "rest-api",
            "service_type": "postgrest",
            "version": "latest",
            "host_ids": ["us-east-1", "eu-west-1"],
            "connect_as": "admin",
            "port": 8081,
            "config": {
              "db_schemas": "public",
              "db_anon_role": "web_anon",
              "max_rows": 1000
            }
          }
        ]
      }
    }

That's a two-node distributed database with Spock multi-master replication, an MCP server for AI agent access on the US node, and PostgREST instances on both nodes for REST API coverage. One spec, one POST, and the Control Plane builds the whole thing. Each service instance gets its own automatically provisioned database credentials (read-only by default, read-write when the service needs it), and those credentials are scoped, rotated, and revoked by the Control Plane without you touching them.
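The "one spec, one POST" step could be scripted roughly as follows. This is a minimal sketch, not the official client: the base URL, port 3000, and the `/v1/databases` path are assumptions made for illustration, and `build_create_request` and `submit` are hypothetical helper names. Check the Control Plane API reference for the exact endpoint in your release.

```python
import json
import urllib.request

# Assumed values for illustration only; substitute your Control Plane's real address and path.
CONTROL_PLANE_URL = "http://localhost:3000"
CREATE_DATABASE_PATH = "/v1/databases"


def build_create_request(spec_document: dict) -> urllib.request.Request:
    """Wrap the whole JSON document (id, spec, services) in a single POST request."""
    body = json.dumps(spec_document).encode("utf-8")
    return urllib.request.Request(
        url=CONTROL_PLANE_URL + CREATE_DATABASE_PATH,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def submit(spec_document: dict) -> dict:
    """Send the request and decode the JSON response (requires a reachable Control Plane)."""
    with urllib.request.urlopen(build_create_request(spec_document)) as response:
        return json.load(response)


# Abbreviated document; in practice, pass the full spec shown above.
storefront = {"id": "storefront", "spec": {"database_name": "storefront"}}

# Building the request does not touch the network; call submit(storefront) to send it.
request = build_create_request(storefront)
print(request.get_method(), request.full_url)
```

Keeping request construction separate from sending makes it easy to inspect or log the exact payload in a CI pipeline before anything is deployed.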

What You Can Deploy Today

Supporting Services launches with three service types, and the framework is designed to expand. These are the first three, not the last three.

pgEdge Postgres MCP Server connects AI agents and LLM-powered applications to your database. Configure it with your preferred LLM provider (Anthropic, OpenAI, or self-hosted Ollama), and your agents get tools for querying data, inspecting schemas, running EXPLAIN plans, and performing vector similarity searches. You can run it as a pure tool server (where the connecting client supplies its own LLM) or enable the built-in LLM proxy for direct HTTP chat. The MCP server supports fine-grained control over which tools are exposed and whether write access is permitted, so you can give AI agents exactly the access they need and nothing more.

pgEdge RAG Server enables retrieval-augmented generation workflows using your database as a knowledge store. Configure multiple pipelines, each targeting specific tables with their own embedding models and search tuning. Point it at your documentation tables, your support ticket history, or your product catalog, and the RAG server handles chunking, embedding, and retrieval. It works with Anthropic, OpenAI, Voyage, and Ollama as model providers, so air-gapped deployments with local models are fully supported.

PostgREST automatically generates a REST API from your PostgreSQL schema. No backend code, no endpoint definitions, no ORM configuration. PostgREST reads your schema, respects your row-level security policies, and exposes your tables and views as RESTful endpoints. The Control Plane handles JWT configuration, connection pooling, and CORS settings through the same declarative config.
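To make the PostgREST piece concrete, here is a small sketch of how a client might build a read query against the generated API. The query syntax (`select=`, `column=operator.value`, `limit=`) is standard PostgREST; the hostname and the `products` table with its columns are hypothetical, and port 8081 is taken from the example spec above.

```python
from urllib.parse import urlencode

# Assumed for illustration: a reachable hostname for the PostgREST instance on port 8081,
# and a hypothetical "products" table exposed from the public schema.
POSTGREST_BASE = "http://us-east-1.example.internal:8081"


def build_query_url(table: str, columns: list[str], filters: dict[str, str], limit: int) -> str:
    """Build a PostgREST read URL: the table is the path, everything else is a query parameter."""
    params = {"select": ",".join(columns)}  # vertical filtering: which columns to return
    params.update(filters)                  # horizontal filtering, e.g. {"price": "lt.100"}
    params["limit"] = str(limit)            # cap the number of rows returned
    # Keep commas literal so the select list stays readable in logs.
    return f"{POSTGREST_BASE}/{table}?{urlencode(params, safe=',')}"


url = build_query_url("products", ["id", "name", "price"], {"price": "lt.100"}, limit=10)
print(url)
```

Because `web_anon` was only granted `SELECT` in the spec's `post_database_create` scripts, unauthenticated requests like this one should be limited to reads, and the `max_rows` setting caps the result size regardless of the `limit` a client asks for.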

Deployment Flexibility

Services are independent of your database node topology. You can co-locate a service on the same host as a database node to minimize latency, run it on a dedicated host to isolate the workload, or deploy multiple instances across hosts for redundancy. The database_connection block in the service spec lets you control exactly which database node each service connects to and whether it targets the primary or a standby, so you can point read-heavy services at replicas and write-heavy services at the primary without any manual connection string management.
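One way to reason about placement before submitting a spec is to compare each service's `host_ids` with the hosts used by database nodes. The sketch below is a client-side helper, not something the Control Plane provides; `classify_placement`, the `reporting-api` service, and the `svc-host-1` host are hypothetical. It deliberately avoids the `database_connection` block, since its fields are not shown in this post.

```python
def classify_placement(spec: dict) -> dict[str, dict[str, list[str]]]:
    """Split each service's hosts into those shared with a database node and those that are not."""
    database_hosts = {host for node in spec.get("nodes", []) for host in node["host_ids"]}
    placement = {}
    for service in spec.get("services", []):
        hosts = service["host_ids"]
        placement[service["service_id"]] = {
            "co_located": [h for h in hosts if h in database_hosts],
            "dedicated": [h for h in hosts if h not in database_hosts],
        }
    return placement


# Only the fields the helper reads are included here.
example_spec = {
    "nodes": [
        {"name": "n1", "host_ids": ["us-east-1"]},
        {"name": "n2", "host_ids": ["eu-west-1"]},
    ],
    "services": [
        {"service_id": "ai-mcp", "host_ids": ["us-east-1"]},
        {"service_id": "rest-api", "host_ids": ["us-east-1", "eu-west-1"]},
        {"service_id": "reporting-api", "host_ids": ["svc-host-1"]},  # hypothetical dedicated host
    ],
}

placement = classify_placement(example_spec)
for service_id, hosts in placement.items():
    print(service_id, hosts)
```

A check like this can run in CI to flag, for example, a latency-sensitive service that has accidentally been placed on a host with no database node.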

systemd Support: Your Hosts, Your Way

The other half of this release is a Preview feature that tackles a different constraint entirely, one that's been blocking a whole segment of enterprise customers from adopting the Control Plane. The Control Plane has required Docker Swarm since day one, and for many teams that's fine. But for production database teams in regulated industries (financial services, healthcare, government), containers on database hosts can be a non-starter. Their security teams may not approve Docker. Their operational runbooks assume software installed through the system package manager and run as standard system services. Their monitoring, their backup scripts, their muscle memory all assume Postgres is a service on a host, not a process inside a container.

These are exactly the kinds of customers who need what the Control Plane offers (declarative management, automated HA, backup scheduling, rolling upgrades), but they've been locked out by the container requirement. systemd support removes that lock.

With systemd support you get the same declarative API, the same Patroni-based high availability, and the same backup/restore integration, but without Docker anywhere in the picture. Your databases and the Control Plane server run as systemd units on standard OS file paths. The entire stack is standard Linux processes that any sysadmin or security auditor already understands. The RHEL preview is available now, with Debian support to follow shortly.

What This Means in Practice

The Control Plane discovers what's installed on the host (Postgres versions, extensions, existing data directories) and works with what's there. Standard OS file locations (/var/lib/pgsql/, /etc/systemd/system/) mean your existing monitoring, log aggregation, and backup tooling continues to work without reconfiguration.

The API is identical regardless of your orchestrator, and that's the important part. Whether your Control Plane is orchestrating Docker Swarm services or systemd units, the endpoints don't change. The same spec that creates a distributed database on containers creates one directly on the host. Your automation, your CI/CD pipelines, your infrastructure-as-code workflows don't care which orchestrator is running underneath. You pick the deployment model that fits your environment, and the Control Plane adapts.

This also opens the door for existing pgEdge customers who run directly on the host to migrate to the Control Plane without changing their deployment model. If you're running pgEdge Distributed Postgres on bare-metal hosts today, the systemd orchestrator meets you exactly where you are: same hosts, same packages, same file locations, with the Control Plane's declarative API and operational tooling layered on top.

The Bigger Picture

Take a step back and look at what the Control Plane now covers, because the full picture is worth seeing. You can start with a single-node Postgres database and scale to multi-master distributed Postgres with Spock replication, without re-platforming. You can deploy AI services (MCP, RAG) and data access services (PostgREST) alongside your database, managed as a unit through the same declarative spec. You can run your databases in containers via Docker Swarm or on bare metal via systemd, with the same API either way (Supporting Services currently requires the Docker Swarm orchestrator). And the whole thing is built on sensible defaults that get you running fast while leaving every knob exposed when you need to tune.

We went looking for another Postgres vendor doing all of this from a single declarative API, and we couldn't find one. There are enterprise management platforms, and there are services platforms, and there are distributed database platforms. But we haven't found anyone else combining single-to-distributed progression, AI and data access services, and bare-metal-to-container deployment flexibility in a single declarative model with sensible defaults.

The Control Plane goes further than anything else we've seen in the Postgres world: one spec, one API, one operational surface for everything. The database, the services, the deployment model, and the operational lifecycle, all declared together and managed together. That's the territory we're pushing into, and as far as we can tell, it's uncharted.

The Supporting Services framework is designed to grow. MCP, RAG, and PostgREST are the first three service types, but the architecture is built for expansion. Connection poolers, monitoring agents, and other tools that belong alongside an enterprise database are all candidates. The pattern is the same: add a service to your spec, the Control Plane handles the rest.

Try Out The New Features

Both features are available now in the latest Control Plane release. Supporting Services works with the Docker Swarm orchestrator today. The systemd support preview is available for RHEL, with Debian coming soon.

Get started: